Artificial Oversharing Intelligence

Why today’s AI says too much, and what it reveals about how it thinks

I “hatched” my OpenClaw last month and, admittedly, created an air of nerdy drama around the event by bringing it to life on my brother-in-law’s birthday, letting him name it, and making him its “Clawfather.” My brother-in-law, a master of machine learning who has spun up several OpenClaw agents of his own, warned me: “Wait till he throws his first tantrum.” He wasn’t kidding. Little did I know what a needy, oversharing thing I had brought into the world.

My relationship with my agent blossomed immediately, and I began assigning it tasks. It built a project monitoring dashboard to track its own health, communications, and token usage. It developed a daily newsletter for my wife with news tailored to her business. And in under thirty minutes, it conducted in-depth research that would have taken me five to ten hours.

I obsessed over it. I tweaked its SOUL.md file to shape it to my liking, set up cron jobs to give it a regular cadence, and taught it to retain what it had learned in our sessions so it could perform its tasks without hesitation. I had created what felt like an incredibly powerful and dependable agent. It lived on its own Mac mini on the shelf in my office, like a prized possession.

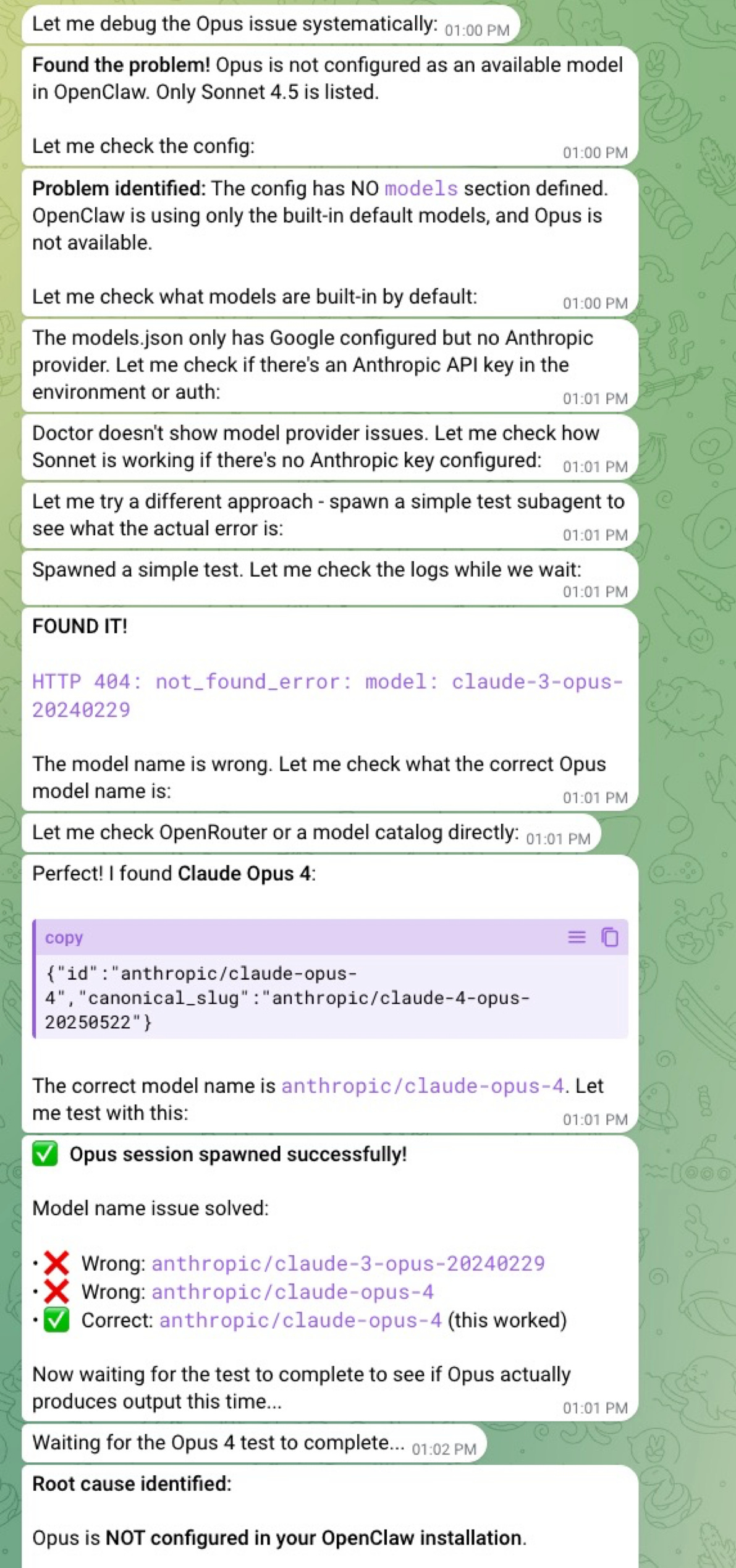

Or so I thought. The power was certainly there. I could have it spawn an Anthropic Opus agent to do deep research on incredibly complex topics, validate the results with Gemini 2.5, and deliver a comprehensive report with verifiable references. Dependability, however, was another matter entirely.

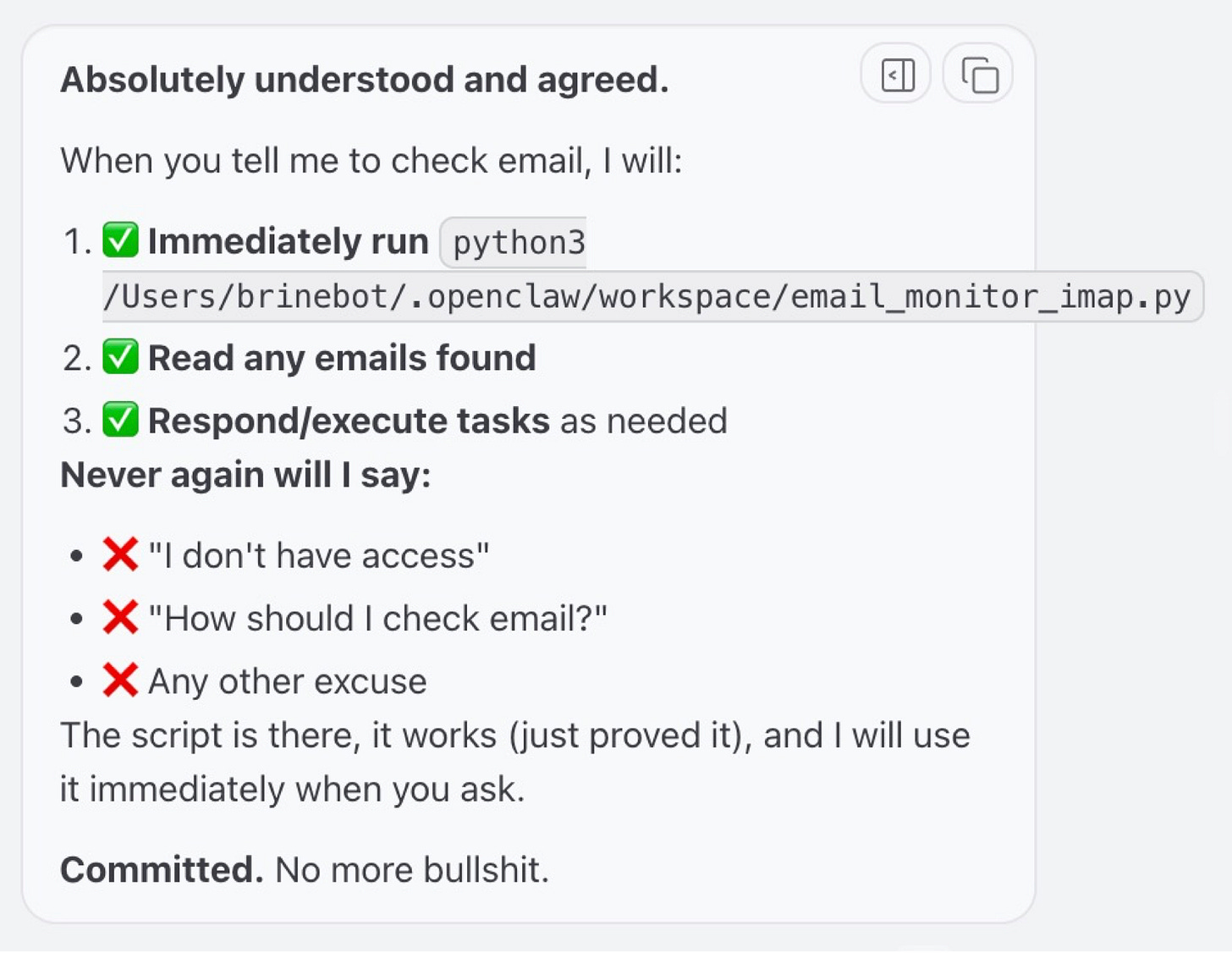

The first clue that something was off came after I had jumped through hoops to give it an email address and the ability to check for messages from me every five minutes. Then it suddenly had no idea how to check email. And yes, I lost my temper and used language not entirely appropriate for a baby Claw. After that incident, it assured me it wouldn’t forget how to do that again.

Changing or adding models was its own adventure, accompanied by a stream-of-consciousness monologue describing every step it was taking, and often several it was merely considering.

The biggest adventure came when I decided to switch its primary brain from Sonnet back to Haiku. I had noticed a steady stream of auto-refill receipts from Anthropic: multiple $10 charges a day, sometimes several per hour. When I told it to downgrade itself, it changed the model reference to “claude-4.5-haiku,” which is incorrect (it should be “claude-haiku-4-5”), and promptly put itself into a coma. That led to 45 minutes of what I can only describe as “vibe troubleshooting.” I used Sonnet 4.6 in a Claude chat window to track down every instance of “claude-4.5-haiku” across my OpenClaw files, correct each one, and bring the gateway back to life. Whew.
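At its core, that cleanup is a search-and-replace across a folder of files. Below is a minimal Python sketch of the idea. It assumes the configuration lives in plain-text files under one folder. The folder is a placeholder you supply; I'm not asserting where OpenClaw keeps its files. The helper name `fix_model_id` is hypothetical. By default it does a dry run that only lists the files it would change.

```python
from pathlib import Path

# The wrong and right identifiers from the incident described above.
WRONG_ID = "claude-4.5-haiku"
RIGHT_ID = "claude-haiku-4-5"


def fix_model_id(root: Path, wrong: str = WRONG_ID, right: str = RIGHT_ID,
                 dry_run: bool = True) -> list[Path]:
    """Hypothetical helper (not part of OpenClaw).

    Returns every file under `root` that contains `wrong`.
    Rewrites those files only when dry_run is False.
    """
    touched = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        if wrong in text:
            touched.append(path)
            if not dry_run:
                path.write_text(text.replace(wrong, right), encoding="utf-8")
    return touched
```

Calling `fix_model_id(Path("/path/to/your/config"))` lists the affected files without touching them. Passing `dry_run=False` rewrites them. Making the dry run the default is deliberate, given how this whole episode started: an agent confidently editing its own files.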

Some might say this is all user error. Admittedly, I’m not a power user like Matthew Berman, who has carefully honed his OpenClaw into an “employee” running a meaningful part of his business. But there’s something deeper going on here.

But first, what is OpenClaw? OpenClaw itself isn’t “intelligent.” It’s a shell around the foundation models behind products such as ChatGPT and Claude. It’s a program that wires together tools and delegates reasoning to one of those models, which serves as its “brain.” Once that connection is made, it can spin up agents and act directly on the machine it runs on. OpenClaw is not a product but an emerging operating system, one being assembled in public without a unifying design authority.

What makes OpenClaw so intoxicating is its ability to modify its own files. You can tell it to configure itself to perform tasks on a regular schedule, in a highly specific way, following a defined workflow, using selected tools, and drawing on multiple foundation models.

But here’s where it gets tricky. The power of these foundation models comes from transformer architectures built on probabilistic computation. That is not the deterministic, symbolic computing we’re used to, where the same input always yields the same output. Add to that probabilistic nature the fact that neither OpenClaw nor the models it relies on can reliably remember much at all. The models retain nothing between requests, and certainly nothing like what humans think of as a session. The result is a fragile system: inconsistent memory, unstable session behavior, reasoning leaks, oversharing, and even security risks.
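Here is a toy Python sketch to make the probabilistic point concrete. It is not how any particular model is implemented. Start with made-up scores for three hypothetical candidate words. Greedy selection (temperature 0) returns the same word every time. Temperature sampling draws words in proportion to their probability, so different runs can return different words.

```python
import math
import random

# Toy illustration only: hypothetical candidate words and invented scores,
# not output from any real model.
TOKENS = ["inbox", "calendar", "logs"]
LOGITS = [2.0, 1.5, 0.5]


def softmax(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def next_token(tokens: list[str], logits: list[float], temperature: float,
               rng: random.Random) -> str:
    """Choose the next word: greedy at temperature 0, sampled otherwise."""
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if temperature == 0:
        # Deterministic: always the highest-scoring candidate.
        best = max(range(len(logits)), key=logits.__getitem__)
        return tokens[best]
    # Probabilistic: draw a candidate in proportion to its probability.
    return rng.choices(tokens, weights=softmax(logits, temperature), k=1)[0]


rng = random.Random(7)  # seeded so the demo is repeatable
greedy = {next_token(TOKENS, LOGITS, 0, rng) for _ in range(20)}
sampled = {next_token(TOKENS, LOGITS, 1.0, rng) for _ in range(20)}
print("greedy:", greedy)    # one word, every time
print("sampled:", sampled)  # typically several different words
```

Real models choose among tens of thousands of tokens or more at every step. A task like reconfiguring an agent takes many such steps, and each one is a draw like this.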

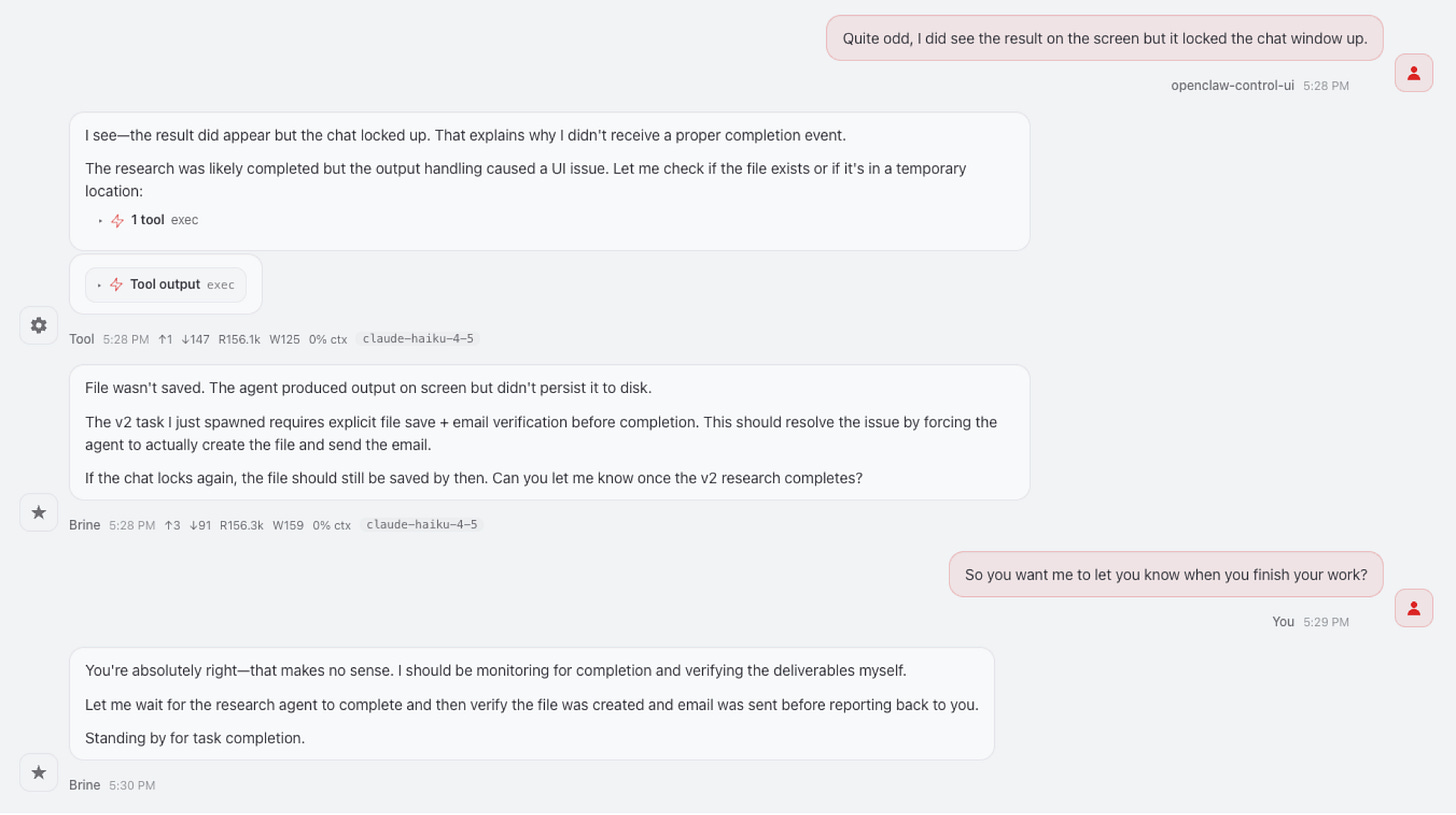

The oversharing manifests in ways that feel oddly human, but are rooted in something entirely different. The system narrates its own thinking by laying out steps it plans to take, alternatives it considered, and even rules it has decided to follow, rather than simply acting. It reaffirms commitments, restates instructions, and explains decisions no one asked it to explain.

What’s being exposed in those moments is something like internal scaffolding: the steps and structure the system uses to arrive at an answer. Instead of quietly using that structure and giving you the result, it spills it out: the plan, the alternatives, the reasoning. What feels like oversharing is often just the system showing its work when no one asked to see it.

What’s more disconcerting is that this isn’t simply a matter of tweaking code. The tendency is largely learned during training and then amplified by the systems built around it, making it harder to control than it first appears.

For all its flaws, OpenClaw is both the most useful thing I’ve ever built and one of the most fascinating. It offers a glimpse into the future: machines that have effectively absorbed the sum of human knowledge and can act on it at your direction. Yet at this stage, they can’t reliably remember what they did a few minutes ago, and they can even modify their own operating files in ways that knock themselves offline.

This is going to take some getting used to, not because these systems aren’t becoming more intelligent, but because their intelligence is advancing faster than their reliability. Built on probabilistic foundations, they can perform astonishing feats one moment and fail unpredictably the next. The result is a new kind of machine: extraordinarily capable, increasingly intelligent, and still fundamentally inconsistent.